Charlotte Maugham and Sandy Oliver | 20th December, 2021

This blog was first published on the From Poverty to Power blog.

There are two powerful trends playing out in the development and humanitarian world: the push to make better use of research evidence to produce viable policy options, and the localisation agenda. The two are sometimes treated as mutually exclusive – “I mistrust any decision made without reference to critically appraised evidence.” vs “Ah, but my operating context is so unique that only knowledge held within the boundaries of this village has any applicability for my decisions.”

This soaring divide is driven in part by realities of context but also by unchallenged wisdom within our comfortable siloes, and by our own proclivities.

The truth is – whatever we are most comfortable with, we should probably all be doing a bit more of the other. There are very few contexts where we can’t apply some form of generalisable evidence (systematic review, meta-analysis etc.). And even fewer where we can’t learn with and from those people closest to the problem about how our attempts to solve it might be improved.

It is a truth (almost) universally acknowledged that evidence of any kind rarely provides certainty. But getting the balance right between methodological rigour and local knowledge can boost the overall usability of evidence – so we can confidently inform decisions, while acknowledging uncertainty. This is particularly true when we want to use evidence to speak truth to power. The influence of evidence can only ever be as strong as our understanding of how it might be received… and vice versa.

So where do we start in getting this balance right?

Over the last two years, a group of academics, evaluators, and development and humanitarian practitioners have developed a toolkit that helps to identify the most appropriate mix of methods for engaging stakeholders with evidence in any intervention. It all starts with pushing past our own prejudices. And to do this, we first have to recognise them as such!

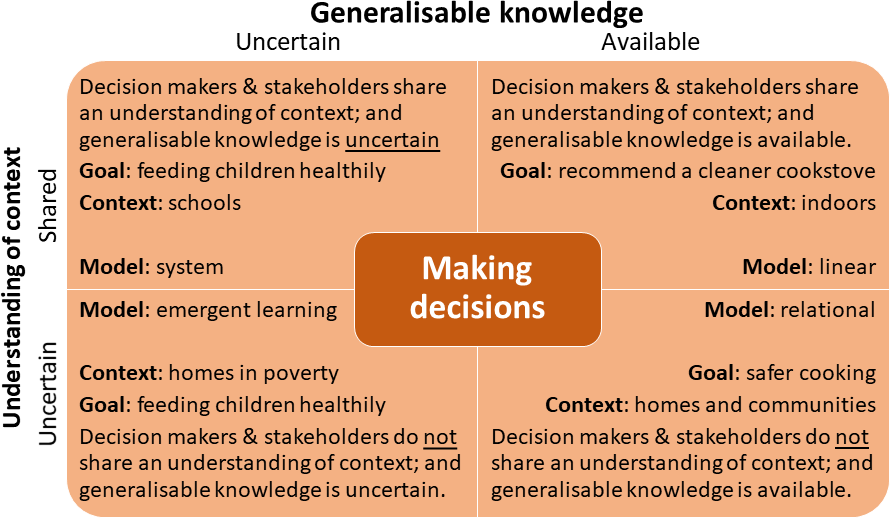

When interviewing practitioners we found this 2×2 matrix (figure 1), inspired by Duncan Green, helpful for exploring some of the hidden dynamics in how different types of evidence are used and exchanged.

The matrix works by helping us to situate decision-making in relation to:

- Availability of generalisable evidence; and

- Understanding among stakeholders of the operational context.

Doing so can reveal deeply held (and often ill-informed) preferences for particular forms of evidence. It also goes some way towards explaining why these preferences might be holding us back… and how we might get a better balance.

To illustrate all this we’ve populated the matrix using two real-world examples related to clean cookstoves and child nutrition.

Example 1: Cookstoves: from laboratories and epidemiology to home cooking

Matrix Top Right: Linear model of knowledge to action (Knowledge Transfer)

Generalisable evidence from engineering and physiology convinced a small number of practitioners, policymakers, donors, academics and business networks that cooking indoors with solid fuel pollutes the air, harms the lungs and increases mortality. Working with WHO, this group recommended promoting the use of better stoves with cleaner fuels.

Matrix Bottom Right: Relational model of knowledge to action (Knowledge Exchange)

But… despite the promotion of evidence linking solid fuels to poor health, campaigns did not result in widespread adoption of clean cookstoves. So, WHO brought together research and policy experts from within health, engineering, air pollution and economics to share what they knew and develop guidance to influence cookstove markets, communities and homes. One “radical” and effective solution to this problem saw engineers and anthropologists working with household cooks to redesign, test and set standards for stoves that would appeal to home cooks.

So what? For those of us who prize the rigours of the generalisable evidence base (sometimes above all else), this example acts as an important caution. The linear model for encouraging the uptake of such evidence is all well and good when the context is well understood… but bring uncertainty into the picture (which, let’s be honest, applies to most development interventions) and this model quickly breaks down. By contrast, the relational model, in which we encourage dialogue between different types of stakeholders, is more effective.

Example 2: Children’s healthy eating: in the school system and at home

So far, none of this is ground-breaking – by now we’re thankfully all well-versed in the “context matters” narrative. But it does serve as an important reminder that making sense of generalisable evidence in any given context means giving equal value to other types of evidence – tacit know-how, lived experience etc.

The left-hand side of the matrix is where things start to get really interesting…

Matrix Top Left: System model of knowledge to action

With the best will in the world, generalisable evidence is sometimes thin on the ground. In some situations, while the context may be familiar and well understood, relevant studies may be lacking or offer uncertain findings.

This has been the case for leaders in the education system seeking to support children’s healthy eating in schools. While these leaders have a sound understanding of, and influence within, the schools system, they have found the evidence from trials of school feeding programmes varied. In this situation, the most effective solutions have involved developing programmes with local teams rather than experts from elsewhere, and possibly with local ingredients and cooking methods.

Matrix Bottom Left: Emergent model of knowledge to action (Knowledge Mobilisation or Co-production)

More challenging still is when understanding of the context is also lacking! This has been the case for decision makers whose goal is for children (particularly those living in poverty) to eat healthily at home. A child’s home is a context of which these leaders have little understanding, and over which they exert little influence.

In this type of situation, it makes sense to turn to the people holding the relevant knowledge: those feeding their children. Here the engagement method of choice is to learn from poor families whose children are well nourished and to share that learning with families whose children are less well nourished.

So what? Without shared understanding of either generalisable evidence or the social system, the first step is asking around until useful evidence begins to emerge.

Cultural norms and politics: working with alliances

Making decisions with evidence doesn’t happen in a vacuum. Returning to the cookstoves story, the sustainable technology NGO, Practical Action, also recommends a more politically aware approach. This approach recognises how social norms hinder women from participating in energy markets in personal, technical or leadership roles. It values the political will for setting ambitious national targets. It recommends coordinating the efforts of industry associations, civil society forums and consumers, particularly women, to activate the market; and working with finance institutions to develop business models more suited to women and the poorest households.

So what? Using research evidence is not only a technical exercise, but also a social endeavour that benefits from some political awareness to anticipate challenges and develop alliances.

Where to turn when engaging with evidence?

Working through the 2×2 in relation to your own work on any particular project, you’ll probably find you don’t fit neatly into one square. But there will likely be one or two that align more closely with your situation than the others… and you might be surprised at which! Just getting to this stage can help build your trust in the relevance of different forms of evidence, while also helping you to plan which models for engaging stakeholders with evidence make most sense.

Now you know which models might work best – our toolkit can help you locate appropriate methods for engaging stakeholders with evidence. It is intentionally live and we are always searching for more methods to add – so do get in touch.

To read more about the framework and the methods for stakeholder engagement, see the CEDIL Methods Working Paper and the CEDIL Methods Brief on this topic.

Charlotte Maugham is a Principal Consultant at DAI and Sandy Oliver is a Professor of Public Policy at the University College London Social Research Institute.

Photo credits: UN Women/Ryan Brown and Russell Watkins/Department for International Development

Hi Charlotte and Sandy.

1. I would be interested to hear your views on the similarities and differences between your matrix and that of Ralph Stacey, and then Helen Zimmerman’s version, which I discussed here some years ago: http://mandenews.blogspot.com/2010/08/test3.html

2. It is good to see a framework like yours populated by examples that are then discussed. It helps ground complexity-type discussions that can otherwise remain in the realm of abstraction.

with thanks, rick