Marcella Vigneri | 5 July 2021

Complexity is an unavoidable trait of society and human behaviour. It has been researched and modelled across disciplines in the social and natural sciences to understand how best to tackle it. CEDIL has made the unpacking of complexity in international development interventions one of its core research themes. As part of this undertaking, the Centre organised a public webinar to discuss current evaluation methods and best practices in addressing complex interventions. The speakers discussed various examples and typologies in international development and drew on learning from other fields, such as medical research.

‘Complexity’ is a word that is bandied around in international development. There are those who argue that the world is too complex and therefore evaluation is not possible. But as the discussion in this webinar revealed, there are plenty of methods that researchers could be using to examine and unpack complexity.

Speaking about a CEDIL study currently in production, Edoardo Masset gave an overview of available methods, offering several suggestions on how to navigate the methods’ toolbox to address the causality of different types of complexity in international development.

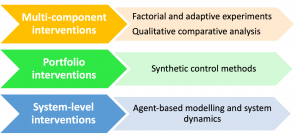

Complex interventions encompass programmes with multiple interacting components, where interactions between these elements generate complex outcomes. The international development programmes that fall under this definition can be grouped into three categories: i. multi-component interventions, such as BRAC’s programme on graduating from ultra-poverty, which feature many interacting activities that create combined effects; ii. portfolio interventions, which are typically large-scale, long-term programmes run across many countries (or programmes targeting multiple sectors within a country), such as the President’s Malaria Initiative; and iii. system-level interventions, where different programme components intend to change the ‘system’ (e.g. education, health, agricultural productivity), as illustrated by the Making Markets Work for the Poor programme.

The upcoming CEDIL paper suggests a simple yet effective approach to navigate and choose methods most appropriate for different types of complexity in international development programmes: match types of projects to specific methods based on the feature of complexity that needs unpacking. This mapping exercise should prompt researchers to reflect on the pros and cons of each suggested method.

Factorial designs, for example, help in detecting interactive effects and synergies. Adaptive trials (still underutilised in international development evaluations) identify changes occurring at the design stage, enabling researchers to update and adapt the hypothesis being tested, the data to be collected and, where needed, even the treatment group. Qualitative Comparative Analysis (QCA) is useful for understanding which combinations of programme activities are most likely to result in the intended impact of the intervention. Synthetic control methods use an intuitive graphical representation to show causal impacts and are best used to detect large effects in interventions observed over a long period of time. Agent-based modelling and system dynamics, though limited in predictive power, are suitable for simulating impact by modelling complexity either as the interactions of agents or as feedback loops.
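To make the point about factorial designs concrete, the sketch below shows how a simple 2x2 design separates the effect of each programme component from their interaction. It is a minimal illustration only: the two components, the `outcomes` data and the `factorial_effects` helper are hypothetical and do not come from the CEDIL paper or any real evaluation, and a real analysis would also estimate standard errors.

```python
from statistics import mean

# Hypothetical outcome data for a 2x2 factorial design.
# Keys are (received component A, received component B); values are
# made-up outcome scores for the units in each arm.
outcomes = {
    (0, 0): [10.0, 12.0, 11.0],  # neither component (control arm)
    (1, 0): [13.0, 15.0, 14.0],  # component A only
    (0, 1): [12.0, 14.0, 13.0],  # component B only
    (1, 1): [19.0, 21.0, 20.0],  # both components together
}


def factorial_effects(cells):
    """Return the single-component effects and the interaction effect."""
    arm_means = {arm: mean(values) for arm, values in cells.items()}
    control = arm_means[(0, 0)]
    effect_a = arm_means[(1, 0)] - control   # A delivered on its own
    effect_b = arm_means[(0, 1)] - control   # B delivered on its own
    combined = arm_means[(1, 1)] - control   # A and B delivered together
    # The interaction is whatever the combined arm adds beyond the
    # sum of the two stand-alone effects.
    interaction = combined - (effect_a + effect_b)
    return {
        "effect_a": effect_a,
        "effect_b": effect_b,
        "combined": combined,
        "interaction": interaction,
    }


effects = factorial_effects(outcomes)
for name, value in effects.items():
    print(f"{name}: {value:.1f}")
```

A positive interaction term in this sketch would indicate a synergy: the components achieve more together than the sum of what each achieves alone. A value near zero would suggest the components act independently.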

How much complexity is relevant to the evaluation question?

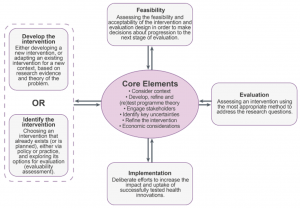

Peter Craig of the MRC/CSO Social & Public Health Sciences Unit at Glasgow University, one of the authors of the forthcoming ‘Framework for the development and evaluation of complex interventions: gap analysis, workshop and consultation-informed update’, discussed how the update of the Medical Research Council (MRC) guidance was motivated by the need to shift the focus of research from complicated interventions to the analysis of events in complex systems.

Source: Skivington et al. 2021

The updated 2020 Medical Research Council framework stresses the need to invest heavily in revisiting the core elements of the evaluation (shown in the pink oval, and including context, programme theory and stakeholder engagement, among others) as researchers move through the four phases of the evaluation (shown in the outer quadrants), which were at the core of the earlier framework.

Evaluating complex programmes requires accounting for moving parts and for activities that are hard to deliver across contexts. These need to be tested at the design stage to ensure that the evaluation will deliver knowledge and recommendations relevant in different economic, social, and cultural contexts. Tackling complexity requires being prepared to adapt the intervention as gaps and key context-specific uncertainties emerge.

How to deal with complexity in real-life evaluations

Estelle Raimondo talked through the typical international development interventions evaluated by the World Bank Independent Evaluation Group (IEG): portfolio and system-level interventions, and interventions characterised by long causal chains, where reconstructing the cause-effect nexus presents a real and complex challenge. Most IEG evaluations are ex post, so there is no possibility of engaging in the design and implementation stages. The interventions also have multiple layers of complexity, from the system level, through the level of behavioural norms, all the way down to the intervention level.

So, what are the best practices for unpacking complexity for these typologies of interventions? First, engaging with stakeholder groups and subnational governance is central to making sense of the complexity of stakeholders and to developing relevant learning from evidence-based interventions. This was done, for example, in the IEG support to the Improving Child Undernutrition and Its Determinants programme, where a mixed-method approach was used, drawing on participatory, theory-based, and case-based evaluation principles. Second, making sense of complexity often entails doing three things: i. using machine learning to layer additional sources of data to improve the understanding of the intervention (for example, using geospatial data to better understand the local context); ii. thinking through a combination of approaches (e.g. in-depth interviewing, rigorous storytelling, and QCA) and data sources (e.g. geospatial data) to deepen the understanding of the overall impact of a project; and iii. being ready to use different types of case-based approaches. Finally, taking a deep view of causal processes through case studies, combined with machine learning algorithms that identify patterns in how multiple components interact, is a practice used to complement more traditional impact evaluation methods.

CEDIL has also funded projects looking at complexity from different angles. Taken together, the discussion suggests that, with a recipe in hand, preparing a meal is simple as long as we are prepared to recognise, acknowledge, and address the paradoxes inherent in complex systems.

Marcella Vigneri is Research Fellow in CEDIL’s research directorate.
